Published Apr 16, 2026, 3:00 PM EDT

Oluwademilade is a tech enthusiast with over five years of writing experience. He joined the MUO team in 2022 and covers various topics, including consumer tech, iOS, Android, artificial intelligence, hardware, software, and cybersecurity. In addition to writing at MUO, his work has appeared on HowtoGeek, Cryptoknowmics, TechNerdiness, and SlashGear.

Oluwademilade attended the University of Ibadan in Nigeria, earning a medical degree from the College of Medicine. Excelling in public service, Oluwademilade was honored with the title of Global Action Ambassador by a student organization affiliated with the United Nations. He received this designation in Kuala Lumpur, Malaysia, in 2020, in recognition of his efforts to make a positive global impact.

In his free time, Oluwademilade enjoys testing new AI apps and features, troubleshooting tech problems for family and friends, learning new coding languages, and traveling to new places whenever possible.

Every time you send a prompt to ChatGPT, Gemini, and the like, it travels across the internet, lands on a server somewhere, and becomes part of a system you don’t really see. That trade-off is usually worth it because cloud models are faster, smarter, and easier to use.

But running a small language model locally on my phone changed what I use AI for. The experience is more private, and sometimes more practical than I expected. It’s not as powerful as cloud AI, but for certain things, it’s actually the better tool.

These are the most useful ways I’ve ended up using a local LLM on my phone.

I use it as a thinking partner

For questions I don’t want leaving my phone

Credit: Oluwademilade Afolabi / MakeUseOf

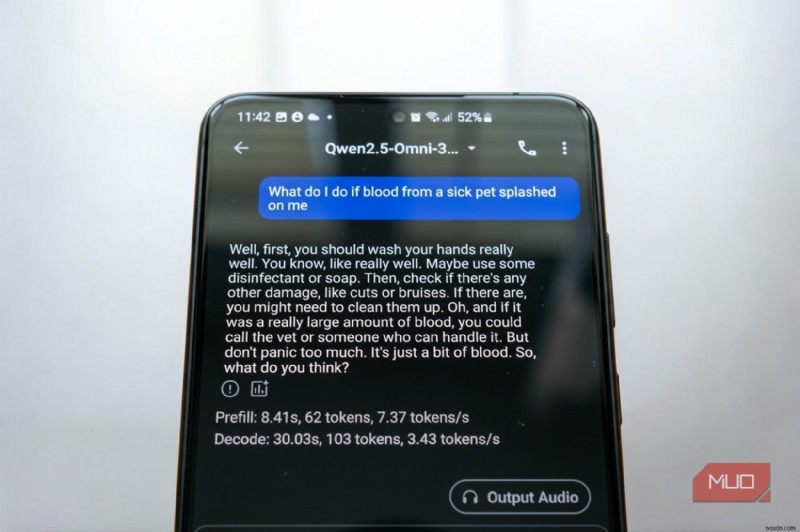

There’s a certain kind of question that gives you pause before typing it into ChatGPT or even Google. Not because it’s inappropriate, but because it’s personal enough that sending it to a server tied to your account doesn’t feel great. What counts as “too personal” will differ from person to person, but everyone seems to have that invisible line.

Those are the questions I’ve started taking to a local model instead. The conversation stays on my hardware, and if I want to be extra cautious, I can flip my phone into Airplane Mode and have a truly air-gapped conversation. At that point, it really is just you and the model, with no connection to the outside world.

This changes how I use AI. I’m more willing to think out loud, test half-formed ideas, or ask questions I’d normally keep to myself.

I dump messy notes into it

And get some structure back

Credit: Oluwademilade Afolabi / MakeUseOf

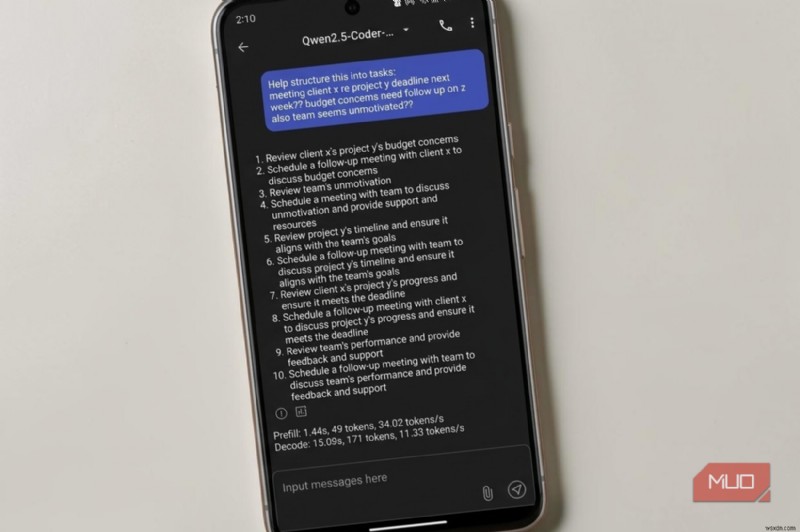

I take a lot of notes, and frankly, most of them are a mess. They include speech-to-text transcripts that loop back on themselves, bullet points with zero context, and half-finished thoughts that made perfect sense in the moment and none at all later. My old workflow involved a lot of staring, shuffling lines around, and slowly trying to reconstruct what I meant.

Now I paste those brain dumps straight into a local model and ask it to organize them. It can pull out the thread, figure out what I was circling around, and return something cleaner to build from. The result isn’t fully polished, but it’s coherent enough to move forward.
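If you’d rather script this step than retype the request each time, a minimal sketch of how such a prompt might be assembled is below. It is an illustration under assumptions, not the exact workflow described here: the helper name `build_notes_prompt` and the wording of the instruction are hypothetical, and the resulting text is simply what you would paste into your local chat app.

```python
def build_notes_prompt(raw_notes: str) -> str:
    """Wrap messy notes in a plain instruction a small local model can follow.

    Hypothetical helper: the instruction wording is an assumption,
    not a prompt taken from the article.
    """
    # Collapse repeated whitespace on each line, then drop empty lines,
    # so the model sees less noise.
    lines = [" ".join(line.split()) for line in raw_notes.splitlines()]
    cleaned = "\n".join(line for line in lines if line)

    return (
        "Organize the notes below into a short outline.\n"
        "Group related points, keep names and figures exactly as written, "
        "and list anything unclear under 'Open questions'.\n\n"
        "NOTES:\n"
        f"{cleaned}"
    )


if __name__ == "__main__":
    messy = "call  Sam re: budget\n\n\n- 3 vendors??\nfollow up thursday   maybe"
    print(build_notes_prompt(messy))
```

Asking for an “Open questions” list is one way to keep a small model from quietly guessing at the parts of a note it can’t parse.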

This works especially well for notes that feel too raw to send anywhere. Because everything stays on-device, I don’t hesitate to paste in material with real names, figures, or personal context. As I mentioned earlier, there’s no mental pause about where the text is going, since it never leaves the phone. This is exactly why I switched everything to local AI and stopped sending my documents to the cloud.

I run quick code checks

When I just need to sanity check the logic

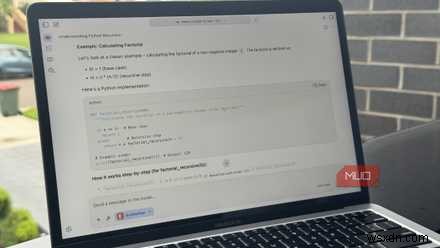

Proprietary logic, internal tooling, client-specific configs: there are plenty of situations where pasting code into a cloud model is a borderline bad idea, regardless of what the terms of service promise. A local LLM running on my phone has become a lightweight fallback when I’m away from my laptop. Just as there are several interesting ways to use a local LLM with MCP tools on a desktop, I can describe an error, paste a small function, or just ask for a plain-English explanation of what a chunk of logic is doing, directly from my phone.

It’s not a replacement for a proper IDE, not even close, but it fills the gaps. This works best with smaller snippets, like a couple of hundred lines at most. Within that range, even modest on-device models are capable of explaining logic, spotting obvious mistakes, or suggesting cleaner approaches.
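As a rough illustration of that size limit, here is a small sketch that refuses oversized snippets before wrapping them in a question. Everything in it is an assumption made for the example: the 200-line budget is just one reading of “a couple of hundred lines,” and the helper name `build_code_check_prompt` is hypothetical rather than part of any app.

```python
MAX_LINES = 200  # assumed budget, one reading of "a couple of hundred lines"


def build_code_check_prompt(snippet: str, question: str, max_lines: int = MAX_LINES) -> str:
    """Pair a short code snippet with a question for a local model.

    Hypothetical helper: raises ValueError if the snippet is too long
    for a small on-device model to handle comfortably.
    """
    # Ignore leading/trailing blank lines so they don't count against the budget.
    lines = snippet.strip("\n").splitlines()
    if len(lines) > max_lines:
        raise ValueError(
            f"Snippet has {len(lines)} lines; trim it to {max_lines} or fewer."
        )
    body = "\n".join(lines)
    return f"{question.strip()}\n\nCODE:\n{body}"


if __name__ == "__main__":
    code = "def total(items):\n    return sum(i.price for i in items)\n"
    print(build_code_check_prompt(code, "Explain what this function does."))
```

Failing loudly on long input, instead of silently truncating, avoids asking the model about code it only partly sees.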

I use it as a zero-pressure language tutor

Practice without streaks, scores, or pressure

Credit: Oluwademilade Afolabi / MakeUseOf

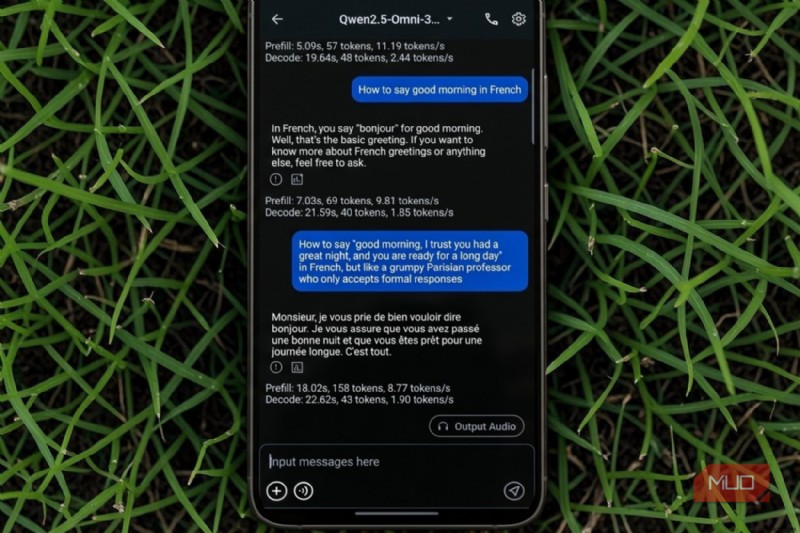

Cloud-based language apps often feel more like mobile games than learning tools. They track streaks, nudge you with notifications, and sprinkle in ads to keep you engaged. A local LLM does none of that.

I’ve been using it to practice French and Spanish in a more free-form way. Taking inspiration from the trick of using a Kindle and ChatGPT as a shortcut to learning a new language, I can ask awkward grammar questions, request roleplay scenarios, or just hold casual conversations without worrying about mistakes. There’s no scoring system and no sense of being evaluated.

Because it runs locally, it also works offline. I can practice during a flight, on spotty hotel Wi-Fi, or anywhere my connection is unreliable. That makes it easier to squeeze in short sessions without planning around connectivity.

I point my camera at things and ask...

What is that?

Credit: Raghav Sethi/MakeUseOf

Some local models can handle images as well as text (these are called multimodal models), which opens up a practical set of uses. I usually use them to summarize whiteboards, interpret handwritten notes, and extract key points from quick photos.

It’s also helpful for everyday situations. I’ve snapped ingredient labels to double-check allergens, photographed product packaging to understand unfamiliar terms, and taken pictures of plants just to get a rough identification. None of this requires an internet connection when the model runs fully on-device.

The results aren’t always perfect. Smaller models can hallucinate details, especially when the image is blurry or cluttered. Even so, it’s often good enough for quick context or a second opinion, which is usually all I need.

A smaller model, but a different kind of usefulness

MNN Chat, developed by Alibaba as an open-source project, has become my go-to for these kinds of tasks because of how well it squeezes performance out of mobile hardware. It single-handedly proves that you can (and should) run a tiny LLM on your Android phone.

That said, running a local LLM on your phone isn’t a replacement for cloud AI. The bigger models still have the edge when it comes to heavy lifting: complex reasoning, coding, deep research, all of that. But that’s not really the point; local models fill a different role. They’re private, always within reach, and quite useful for smaller, everyday tasks.