Published Apr 19, 2026, 10:00 AM EDT

Afam's experience in tech publishing dates back to 2018, when he worked for Make Tech Easier. Over the years, he has built a reputation for publishing high-quality guides, reviews, tips, and explainer articles, covering Windows, Linux, and open source tools. His work has been featured on top websites, including Technical Ustad, Windows Report, Guiding Tech, Alphr, and Next of Windows.

He holds a first degree in Computer Science and is a strong advocate for data privacy and security, with several tips, videos, and tutorials on the subject published on the Fuzo Tech YouTube channel.

When he is not working, he loves to spend time with his family, cycling, or tending to his garden.

I've liked using Linux for its stability. However, my home server, which runs 24/7, seems to push it to its limits, and I've seen it hang at some of the worst possible moments. At times, I've had to step in and do a manual reboot so that my remote box comes back online.

If this is a scenario you face, Linux has a built-in system that is perfect for these kinds of situations. Combining it with systemd's service recovery gives me an efficient two-layer crash recovery mechanism that doesn't require physical intervention.

Related

Related

Linux already has a built-in recovery mechanism

The watchdog timer that protects your system

Linux has a built-in feature called watchdog, which works on the principle that the system regularly sends signals showing it's still active. The moment it doesn't receive a signal from the system, the watchdog assumes there is a problem and triggers a reboot. This feature has existed as far back as the mid-1990s on Linux and has been used mainly on systems where uptime is non-negotiable, like servers and embedded systems.

On some systems, the watchdog is exposed through the /dev/watchdog device file, while on others, it may be /dev/watchdog0. A process has to write to this file to reset the countdown timer. If the process stops writing, it typically means the system is frozen or resources have been consumed by a runaway process. In such a case, the timer expires, and that's how the reboot gets triggered.

There are two kinds of watchdogs: hardware and software (softdog). The first could be a hardware mechanism on the motherboard. It's always capable of system resets, even at times when the kernel is completely locked up. The next is the software version that runs inside the kernel and doesn't need any extra hardware. This version, however, doesn't save you if there's a kernel crash.

Type

Needs dedicated hardware

Survives a hard kernel crash

Best suited for

Hardware watchdog

Yes

Yes

Servers, always-on critical systems

Software (softdog)

No

No

Home servers, VMs, general-purpose rigs

The software watchdog is great for the common freezes that most setups face, like load spikes, memory exhaustion, and runaway processes. However, this feature is disabled by default, and misconfiguring it can cause unnecessary and repeated system reboots. That said, it's become one of my favorite hidden Linux features.

Setting up automatic crash recovery in minutes

A practical watchdog setup that actually works

softdog already works on almost all Linux distros, so you don't need new hardware. Your starting point is loading the module with this command:

sudo modprobe softdog

To ensure that softdog persists after reboots, open the file at /etc/modules (Debian/Ubuntu), add softdog on its own line, and save it. Now install the watchdog daemon and enable it with the commands below:

sudo apt install watchdog

sudo systemctl enable --now watchdog

With this done, it's time to open /etc/watchdog.conf and focus on some important settings:

Setting

What it controls

Practical starting point

interval

How often the system checks in

10 seconds

max-load-1

Load average ceiling before reboot

~6× CPU core count

min-memory

Free memory floor before reboot

~512 pages (~2MB)

The load average for max-load-1 is one minute. This value represents the number of processes that actively compete for CPU time on your device. This implies that if a 4-core machine has a load of 4.0, every core is fully occupied. It's safer to use 6x your core count so that your system has headroom for bursts, which may be legitimate before a lockup.

Also, note that min-memory is specified in memory pages, not megabytes. One page is typically 4KiB on x86_64 systems. Going by this, 512 pages will be about 2MB of free memory.

Once you have completed these configurations, run the command systemctl status watchdog to verify the daemon is running, and the command journalctl -u watchdog allows you to review its activity.

Stopping the watchdog service won't trigger a reboot—the daemon closes /dev/watchdog cleanly on exit, which safely disarms the timer. To actually test that the watchdog will reboot your system, you need to simulate a real failure condition, such as a sustained load spike.

Not every crash needs a reboot

Let systemd fix broken services in seconds

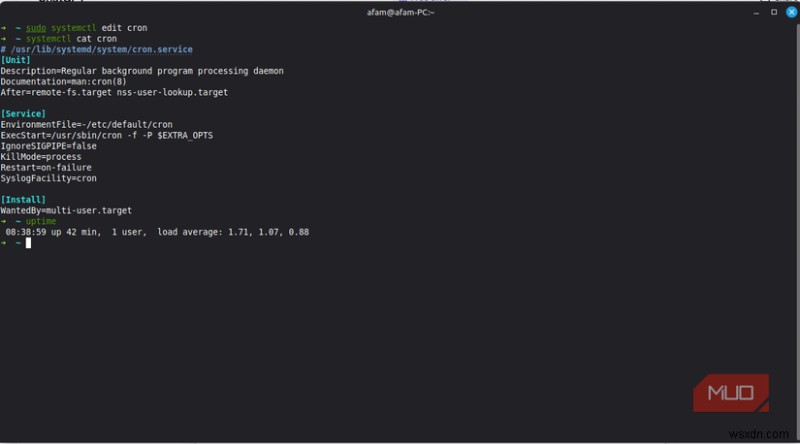

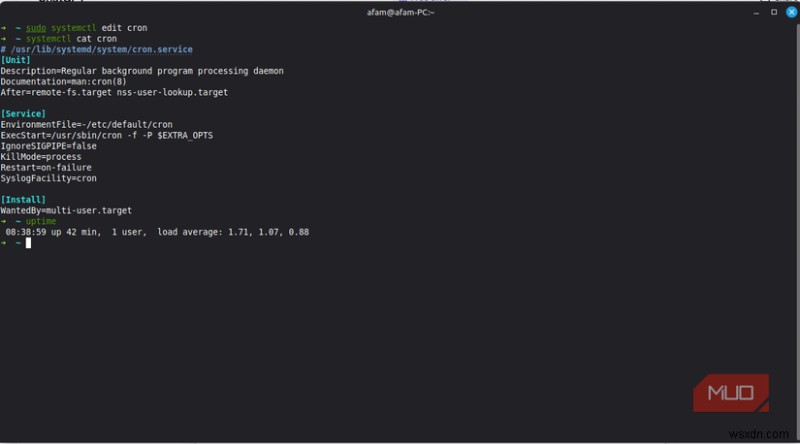

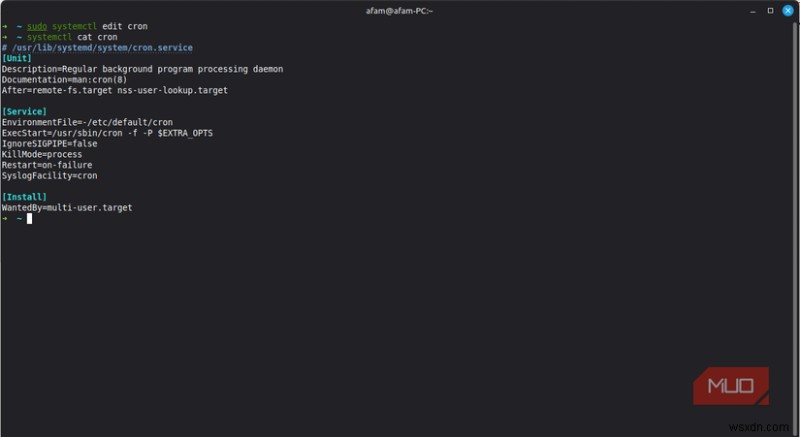

There are several failures that systemd can handle in seconds that don't require rebooting the system. An example could be a service that crashes, exits unexpectedly, or stops responding. You can apply checks so that not everything triggers a reboot without touching the original unit. Run the command below, adding the service name:

sudo systemctl edit

Then add the following:

[Service]

Restart=on-failure

RestartSec=5

Restart=on-failure ensures a restart happens only when the service exits with an error code, and RestartSec=5 is a short delay before actual restarts to prevent a rapid-fire restart loop.

Combining StartLimitIntervalSec and StartLimitBurst will prevent a broken service from restarting indefinitely. They are essential in stopping a crash loop, but only work for services that run within systemd.

Two layers of recovery that cover almost every failure

Neither the watchdog nor systemd Service Management is complete on its own. However, used together, they can handle almost anything.

Failure type

Recovery layer

Expected outcome

Service exits with an error

systemd (Restart=on-failure)

Service restarts within seconds

Service exits cleanly but shouldn't

systemd (Restart=always)

Service restarts within seconds

Full system freeze or load spiral

watchdog daemon

Automatic reboot, no manual step

systemd is well-suited to catching and restarting individual service failures, and the watchdog sits above it to watch the entire system and trigger a restart when something goes wrong beyond the limits of systemd.

This combination means you don't have to be available every time something goes wrong, and it makes server management a more pleasant undertaking. Also, consider learning specific Linux commands that help you fix most system problems.